Our SIGGRAPH ’25 paper on super-large-scale, two-phase FLIP simulations is online now. Bernhard’s solver is especially impressive given that it all runs on a single workstation, without GPU support. The preview image doesn’t do the simulations justice, so please enjoy the video in full-screen and high quality via the link below:

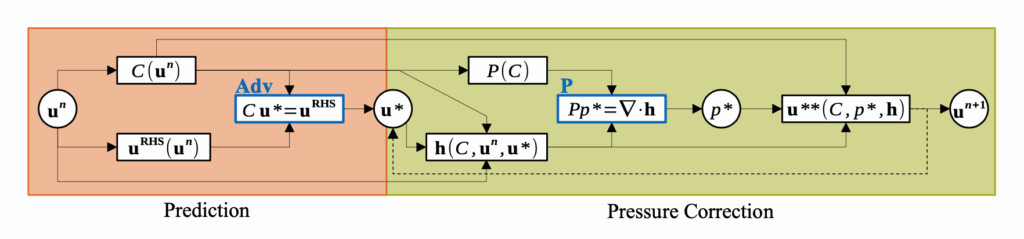

Full paper abstract: Capturing the visually compelling features of large-scale water phenomena, such as the spray clouds of crashing waves, stormy seas, or waterfalls, involves simulating not only the water but also the motion of the air interacting with it. However, current solutions in the visual effects industry still largely rely on single-phase solvers and non-physical “white-water” heuristics. To address these limitations, we present Phase-Field-FLIP (PF-FLIP), a hybrid Eulerian/Lagrangian method for the fully physics-based simulation of very large-scale, highly turbulent multiphase flows at high Reynolds numbers and high fluid density contrasts. PF-FLIP transports mass and momentum in a consistent, non-dissipative manner and, unlike most existing multiphase approaches, does not require a surface reconstruction step. Furthermore, we employ spatial adaptivity across all critical components of the simulation algorithm, including the pressure Poisson solver. We augment PF-FLIP with a dual multiresolution scheme that couples an efficient treeless adaptive grid with adaptive particles, along with a fast adaptive Poisson solver tailored for high-density-contrast multiphase flows. Our method enables the simulation of two-phase flow scenarios with a level of physical realism and detail previously unattainable in graphics, supporting billions of particles and adaptive 3D resolutions with thousands of grid cells per dimension on a single workstation.
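As its name suggests, PF-FLIP belongs to the FLIP family of hybrid particle/grid methods. For readers new to that family, here is a minimal 1D sketch of the classic PIC and FLIP velocity transfers on a periodic grid with linear weights. It illustrates why FLIP-style transfers dissipate far less than PIC, but it is *not* PF-FLIP: there is no phase field, no two-phase pressure projection, and no adaptivity. All names and parameters (`transfer_step`, `flip_ratio`, and the uniform `gravity` grid update standing in for a real pressure solve) are hypothetical choices for this illustration.

```python
def hat(r):
    """Linear (tent) kernel: weight 1 at r = 0, falling to 0 at |r| >= 1 cell."""
    r = abs(r)
    return 1.0 - r if r < 1.0 else 0.0


def particles_to_grid(xs, us, n_cells, dx):
    """P2G: kernel-weighted average of particle velocities at each node.

    Node i sits at x = i * dx on a periodic domain of length n_cells * dx.
    """
    mom = [0.0] * n_cells
    wsum = [0.0] * n_cells
    for x, u in zip(xs, us):
        base = int(x // dx)
        for node in (base, base + 1):
            w = hat(x / dx - node)
            i = node % n_cells  # wrap around the periodic boundary
            mom[i] += w * u
            wsum[i] += w
    # Nodes that received no particle weight get zero velocity.
    return [m / w if w > 0.0 else 0.0 for m, w in zip(mom, wsum)]


def grid_to_particle(xs, grid, dx):
    """G2P: linearly interpolate a nodal grid field at each particle position."""
    n_cells = len(grid)
    out = []
    for x in xs:
        base = int(x // dx)
        t = x / dx - base
        out.append((1.0 - t) * grid[base % n_cells] + t * grid[(base + 1) % n_cells])
    return out


def transfer_step(xs, us, n_cells, dx, dt, gravity, flip_ratio):
    """One P2G -> grid update -> G2P round trip, blending PIC and FLIP.

    flip_ratio = 0.0 gives pure PIC, 1.0 gives pure FLIP.
    """
    grid_old = particles_to_grid(xs, us, n_cells, dx)
    # Hypothetical grid update: a uniform body force only. A real solver would
    # also apply pressure forces from a Poisson solve here.
    grid_new = [g + dt * gravity for g in grid_old]
    # PIC: overwrite particle velocities with the interpolated new grid velocity.
    pic = grid_to_particle(xs, grid_new, dx)
    # FLIP: add only the interpolated grid *change* to the particle velocity.
    delta = grid_to_particle(xs, [a - b for a, b in zip(grid_new, grid_old)], dx)
    flip = [u + d for u, d in zip(us, delta)]
    return [flip_ratio * f + (1.0 - flip_ratio) * p for f, p in zip(flip, pic)]


if __name__ == "__main__":
    # 16 particles, two per cell, with alternating +1/-1 velocities: sub-cell
    # motion that a grid with 8 nodes at unit spacing cannot represent.
    xs = [0.25 + 0.5 * k for k in range(16)]
    us = [1.0 if k % 2 == 0 else -1.0 for k in range(16)]
    print("PIC: ", transfer_step(xs, us, 8, 1.0, 0.01, 0.0, 0.0)[:4])
    print("FLIP:", transfer_step(xs, us, 8, 1.0, 0.01, 0.0, 1.0)[:4])
```

In this setup the PIC round trip averages the alternating velocities away to zero, while the FLIP round trip returns them unchanged, because only the (here zero) grid increment is added back. Practical FLIP solvers often blend the two with a ratio like `flip_ratio` close to 1 to trade a little numerical damping for less particle noise.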