We’re happy to report that our paper “Reviving Autoencoder Pretraining” has finally been published in the journal Neural Computing and Applications, and is now available online at https://rdcu.be/cYmbd.

In short, the paper targets learning features via a forward-backward pass that was inspired by the ping-pong loss from our TecoGAN paper. There, we used it to self-supervise video sequences in terms of forward and backward dynamics, while the new paper uses it to train networks that are “as-invertible-as-possible”. Originally, we tried to coin the term “racecar” loss, the word “racecar” being a nice palindrome that highlights the bidirectional nature.
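To make the forward-backward idea a bit more concrete, here is a minimal NumPy sketch. It is not the implementation from the paper: it assumes purely linear layers, assumes the reverse pass reuses the transposed forward weights, and uses hypothetical helper names (`forward_pass`, `reverse_pass`, `reconstruction_loss`). In practice, a term like this would be added to the regular task loss with some weighting factor.

```python
import numpy as np

def forward_pass(x, weights):
    """Run the input through each linear layer and keep every activation."""
    activations = [x]
    for W in weights:
        x = x @ W
        activations.append(x)
    return activations

def reverse_pass(top, weights):
    """Walk back down from the top activation, reusing the transposed weights
    (an assumption of this sketch)."""
    reconstructions = [top]
    for W in reversed(weights):
        top = top @ W.T
        reconstructions.append(top)
    # Reorder so that reconstructions[i] lines up with activations[i].
    return reconstructions[::-1]

def reconstruction_loss(x, weights):
    """Sum of per-layer mean squared errors between forward activations
    and their reconstructions from the reverse pass."""
    acts = forward_pass(x, weights)
    recs = reverse_pass(acts[-1], weights)
    return sum(np.mean((a - r) ** 2) for a, r in zip(acts, recs))

rng = np.random.default_rng(0)
x = rng.normal(size=(8, 4))

# Orthogonal weights are exactly invertible by their transpose.
orthogonal = [np.linalg.qr(rng.normal(size=(4, 4)))[0] for _ in range(2)]
# Generic random weights are not.
generic = [rng.normal(size=(4, 4)) for _ in range(2)]

loss_orthogonal = reconstruction_loss(x, orthogonal)
loss_generic = reconstruction_loss(x, generic)
```

In this toy setting the loss vanishes (up to floating-point error) for orthogonal weights and is clearly positive for generic ones, so minimizing it pushes the layers toward being invertible by their own transpose.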

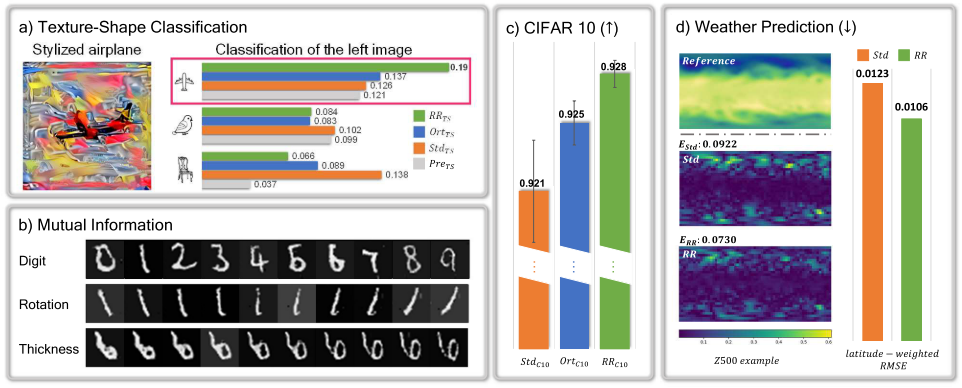

Abstract: The pressing need for pretraining algorithms has been diminished by numerous advances in terms of regularization, architectures, and optimizers. Despite this trend, we re-visit the classic idea of unsupervised autoencoder pretraining and propose a modified variant that relies on a full reverse pass trained in conjunction with a given training task. This yields networks that are *as-invertible-as-possible*, and share mutual information across all constrained layers. We additionally establish links between singular value decomposition and pretraining and show how it can be leveraged for gaining insights about the learned structures. Most importantly, we demonstrate that our approach yields an improved performance for a wide variety of relevant learning and transfer tasks ranging from fully connected networks over residual neural networks to generative adversarial networks. Our results demonstrate that unsupervised pretraining has not lost its practical relevance in today’s deep learning environment.

Further info can be found here.