We would like to share our latest work, AeroTransformer: a step toward bringing the foundation-model paradigm to real-world aerodynamic design.

Code & models: https://github.com/tum-pbs/AeroTransformer

Paper: https://arxiv.org/abs/2604.18062

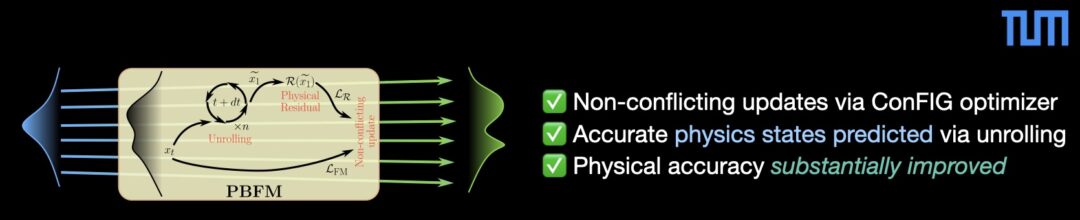

Modern engineering workflows still rely on expensive CFD simulations — especially in 3D, where generating training data is a major bottleneck. AeroTransformer tackles this by combining large-scale pretraining + efficient fine-tuning, enabling accurate predictions with drastically reduced data requirements. Key contributions:

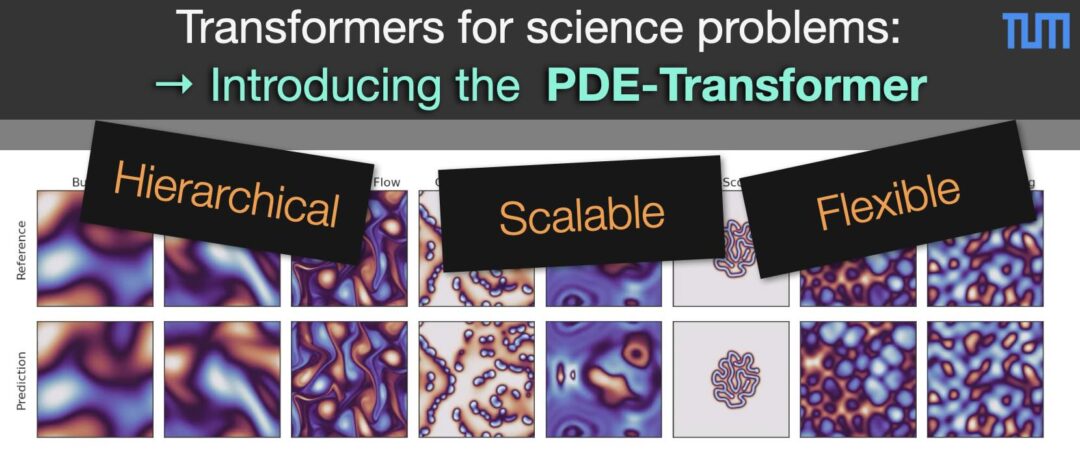

- A Transformer-based architecture tailored for large-scale aerodynamic learning

- Pretraining on diverse geometries (~30k samples) to capture global flow priors

- Task-specific fine-tuning with only a few hundred samples

- A pathway toward foundation models for engineering CFD

The proposed approach:

- Achieves 1.2% relative error in drag coefficient on new geometries

- Reduces error by 84% compared with training from scratch

- Demonstrates strong generalization to new wing configurations
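
As a rough illustration of how figures like these are commonly computed, here is a minimal Python sketch. The function names and all numbers are hypothetical placeholders, and the exact metric definitions used in the paper may differ.

```python
def relative_error(predicted: float, reference: float) -> float:
    """Absolute error normalized by the magnitude of the reference value."""
    return abs(predicted - reference) / abs(reference)


def error_reduction(baseline_error: float, new_error: float) -> float:
    """Fraction by which new_error improves on baseline_error."""
    return 1.0 - new_error / baseline_error


# Hypothetical values for illustration only, not results from the paper.
cd_reference = 0.0200  # drag coefficient from a reference CFD run (assumed)
cd_predicted = 0.0203  # surrogate-model prediction (assumed)

print(f"relative error: {relative_error(cd_predicted, cd_reference):.1%}")
print(f"error reduction: {error_reduction(0.10, 0.02):.0%}")
```

Read this way, an "84% reduction" would mean that the fine-tuned model's error is 16% of the error of the same model trained from scratch, assuming both are measured with the same metric on the same test set.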

This work reinforces a central shift: instead of training task-specific models from scratch each time, we can pretrain once and then adapt the model with a small dataset.
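
To make the pretrain-then-adapt idea concrete, here is a deliberately tiny Python sketch. It is not the AeroTransformer code: it assumes a toy linear model, synthetic data, and plain gradient descent, and every name in it is hypothetical. It only shows why starting from pretrained weights can help when the new task has very few samples.

```python
import random

random.seed(0)
DIM = 8  # number of toy input features (assumption, stands in for geometry)


def make_data(weights, n):
    """Sample n inputs in [-1, 1]^DIM with noiseless linear targets."""
    xs = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(n)]
    ys = [sum(w * x for w, x in zip(weights, row)) for row in xs]
    return xs, ys


def train(weights, xs, ys, steps, lr=0.05):
    """Full-batch gradient descent on mean squared error; returns new weights."""
    w = list(weights)
    n = len(xs)
    for _ in range(steps):
        grad = [0.0] * DIM
        for row, y in zip(xs, ys):
            residual = sum(wi * xi for wi, xi in zip(w, row)) - y
            for j in range(DIM):
                grad[j] += 2.0 * residual * row[j] / n
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w


def distance(a, b):
    """Euclidean distance between two weight vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) ** 0.5


# Two related toy tasks: the target task is a small shift of the pretraining task.
w_pretrain_task = [1.0, -2.0, 0.5, 1.5, -1.0, 2.0, -0.5, 1.0]
w_target_task = [w + 0.05 for w in w_pretrain_task]

# Stage 1: "pretrain" once on a large dataset.
big_x, big_y = make_data(w_pretrain_task, 200)
w_pre = train([0.0] * DIM, big_x, big_y, steps=500)

# Stage 2: adapt with only 3 samples, versus training from scratch on the same 3.
small_x, small_y = make_data(w_target_task, 3)
w_finetuned = train(w_pre, small_x, small_y, steps=50)
w_scratch = train([0.0] * DIM, small_x, small_y, steps=50)

err_finetuned = distance(w_finetuned, w_target_task)
err_scratch = distance(w_scratch, w_target_task)
print(f"fine-tuned weight error:   {err_finetuned:.3f}")
print(f"from-scratch weight error: {err_scratch:.3f}")
```

With only three samples for eight unknowns, the small dataset cannot pin down the weights on its own, so the from-scratch model stays far from the target while the fine-tuned model inherits most of what it needs from pretraining. The real setting involves a Transformer and 3D flow fields rather than a linear map, so this is an analogy for the workflow, not a model of it.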